Lead Product Designer

A 0→1 internal tool for a $1B hedge fund in NYC, replacing a fragmented, manual research workflow with a structured, AI-assisted interface built for speed, accuracy, and trust.

The tool shipped as a core part of the fund's pre-meeting workflow. Analysts stopped treating it as optional after the first week.

Earnings call prep time reduced from 60 minutes to 5 minutes

The tools exist. The data exists. The AI exists. But none of it is connected, and the analyst pays the cost every time.

Analysts juggle AI assistants, OneNote, research docs, and manually fetched data files, all open, none connected. Every insight requires a context switch. Every data point requires manual retrieval. The workflow was never designed as a system.

Opportunity

Unify the research workflow into a single surface, eliminating manual data fetching and context switching without disrupting existing analytical habits.

Existing AI tools produce shallow, unverifiable outputs with no source traceability. Bringing an AI-generated insight into a live meeting is a credibility risk, so analysts revert to manual verification every time, defeating the purpose entirely.

Opportunity

Design an AI interface that earns trust through transparency, cited outputs, editable results, and analyst control at every step.

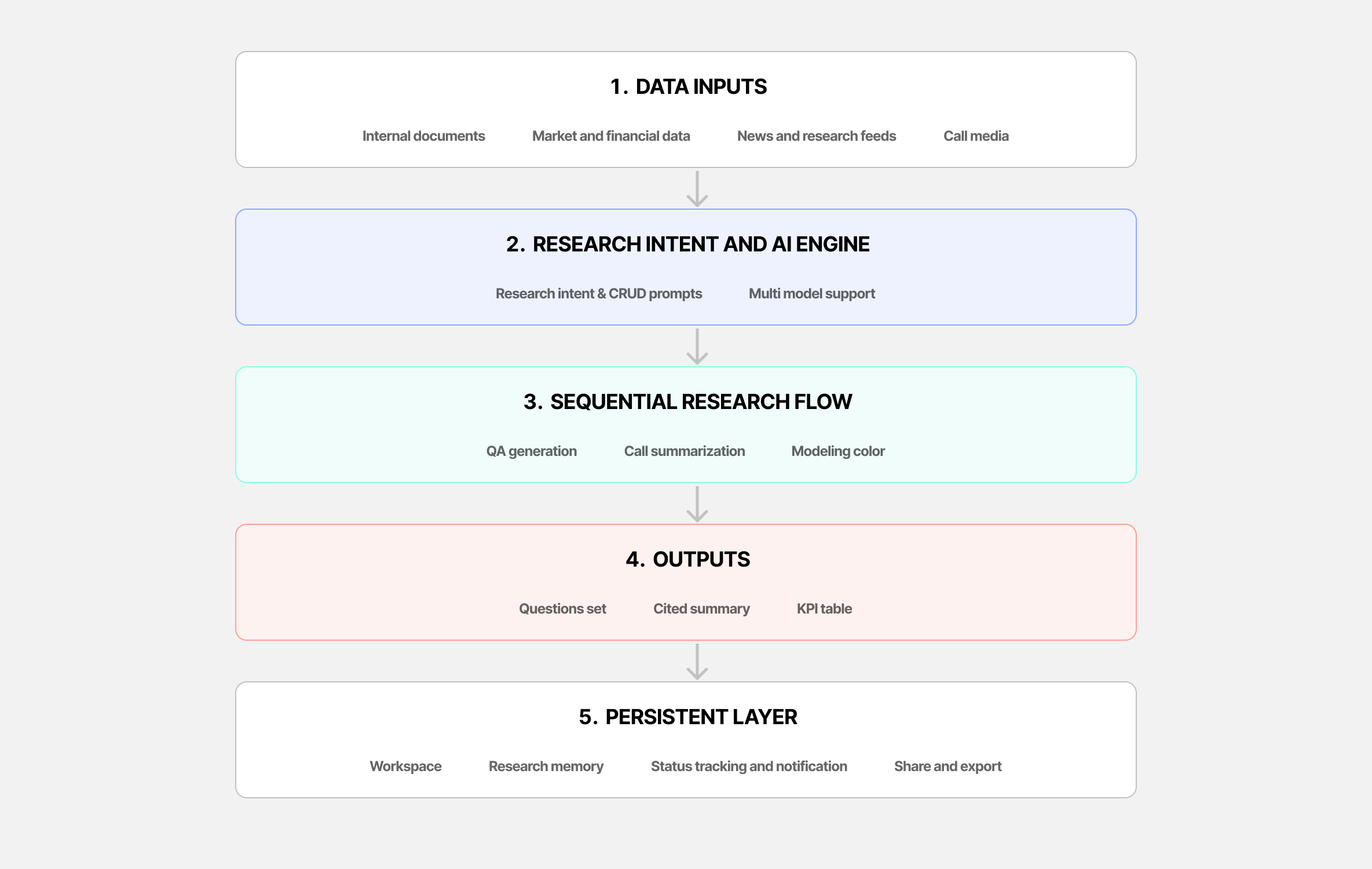

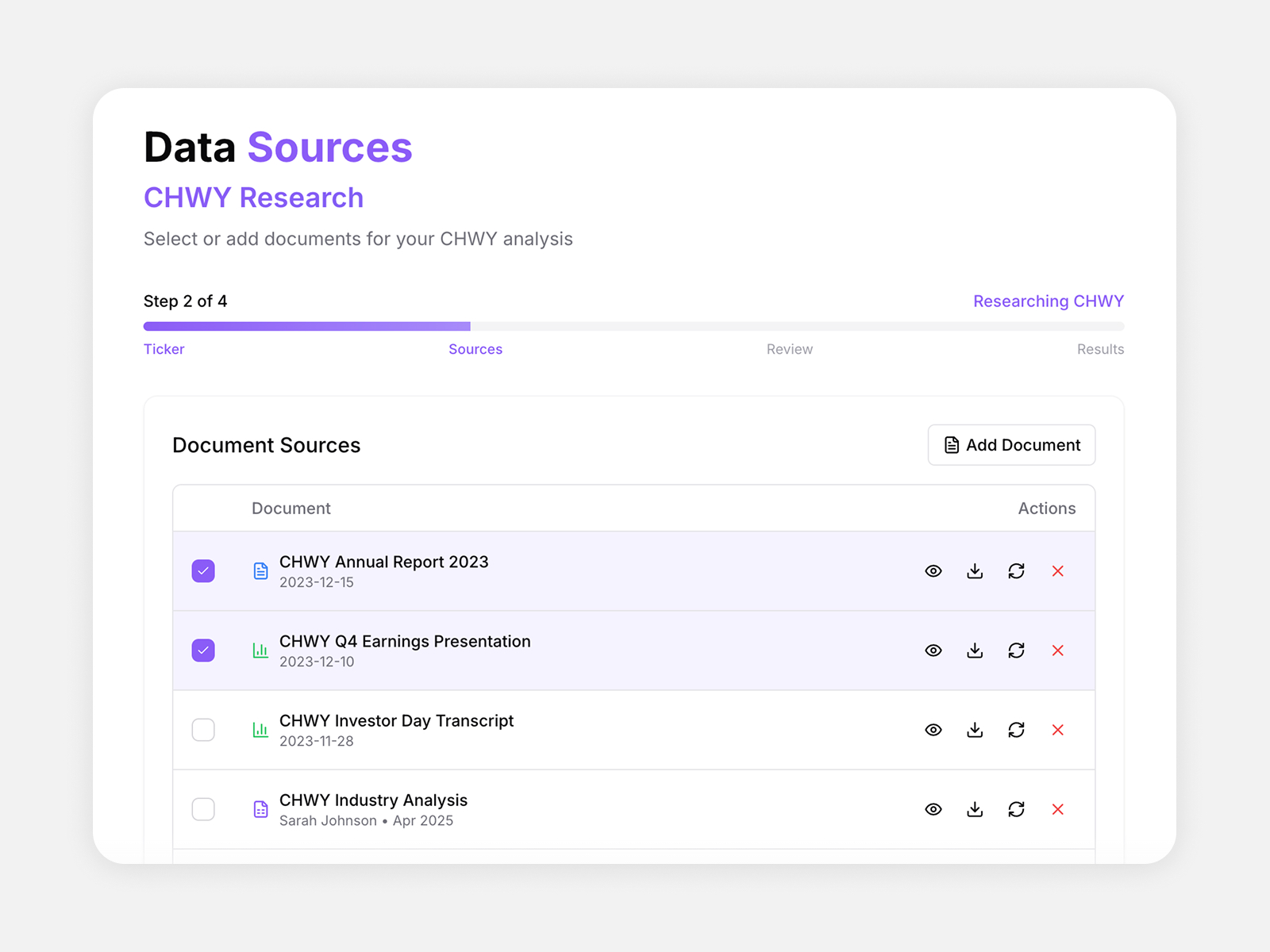

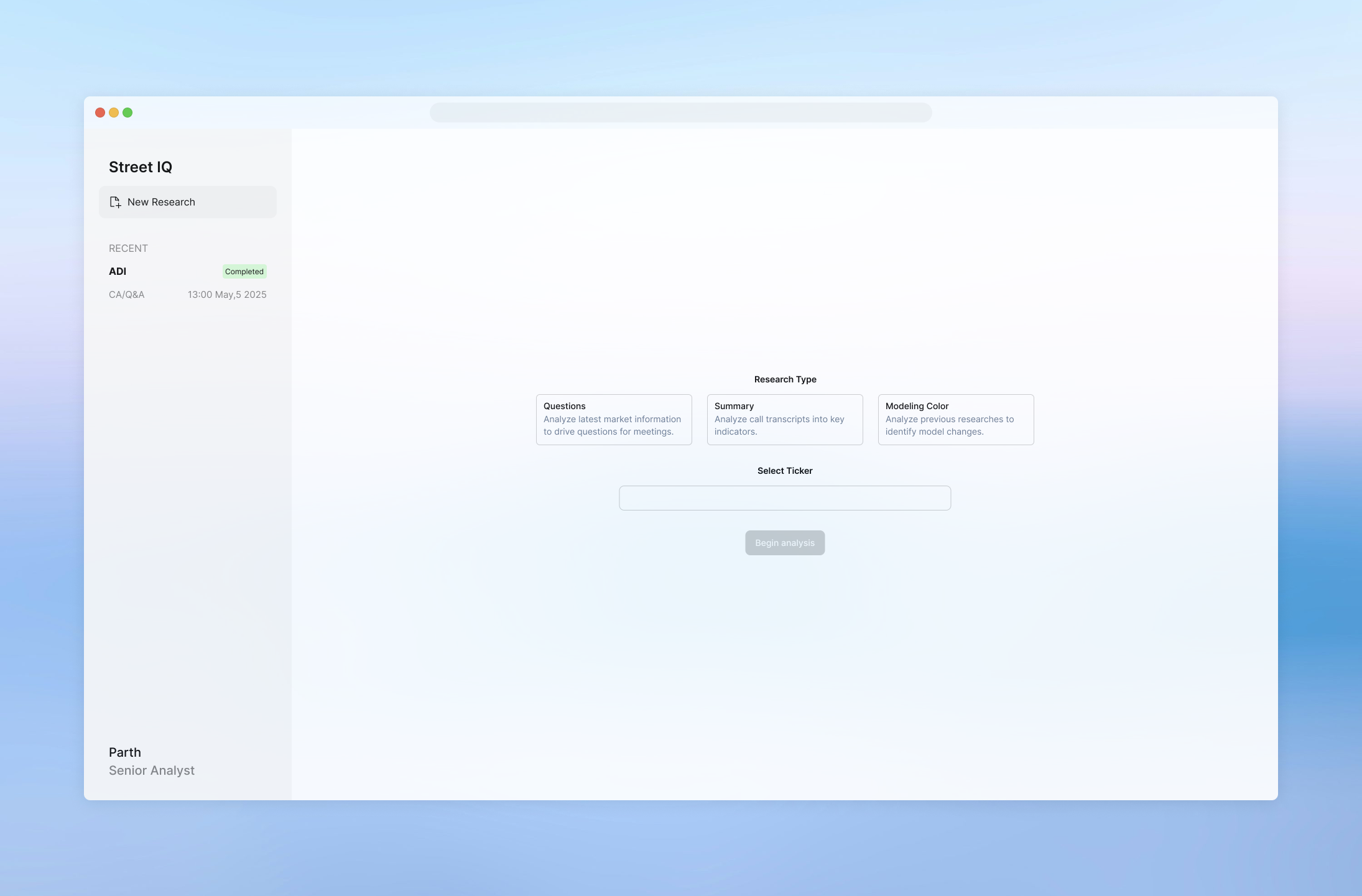

What I did: Designed the entire information architecture (IA) and user flows from scratch, including multi-source data fetching, OneNote sync, source organization, and a sidebar research memory system showing live research status.

Why: With data coming from multiple disconnected sources, the architecture itself was the core UX problem. Without a clear organizational model, every other design decision would collapse.

Result: Analysts had a single, organized workspace where all sources, statuses, and outputs were visible and connected without manual reconciliation.

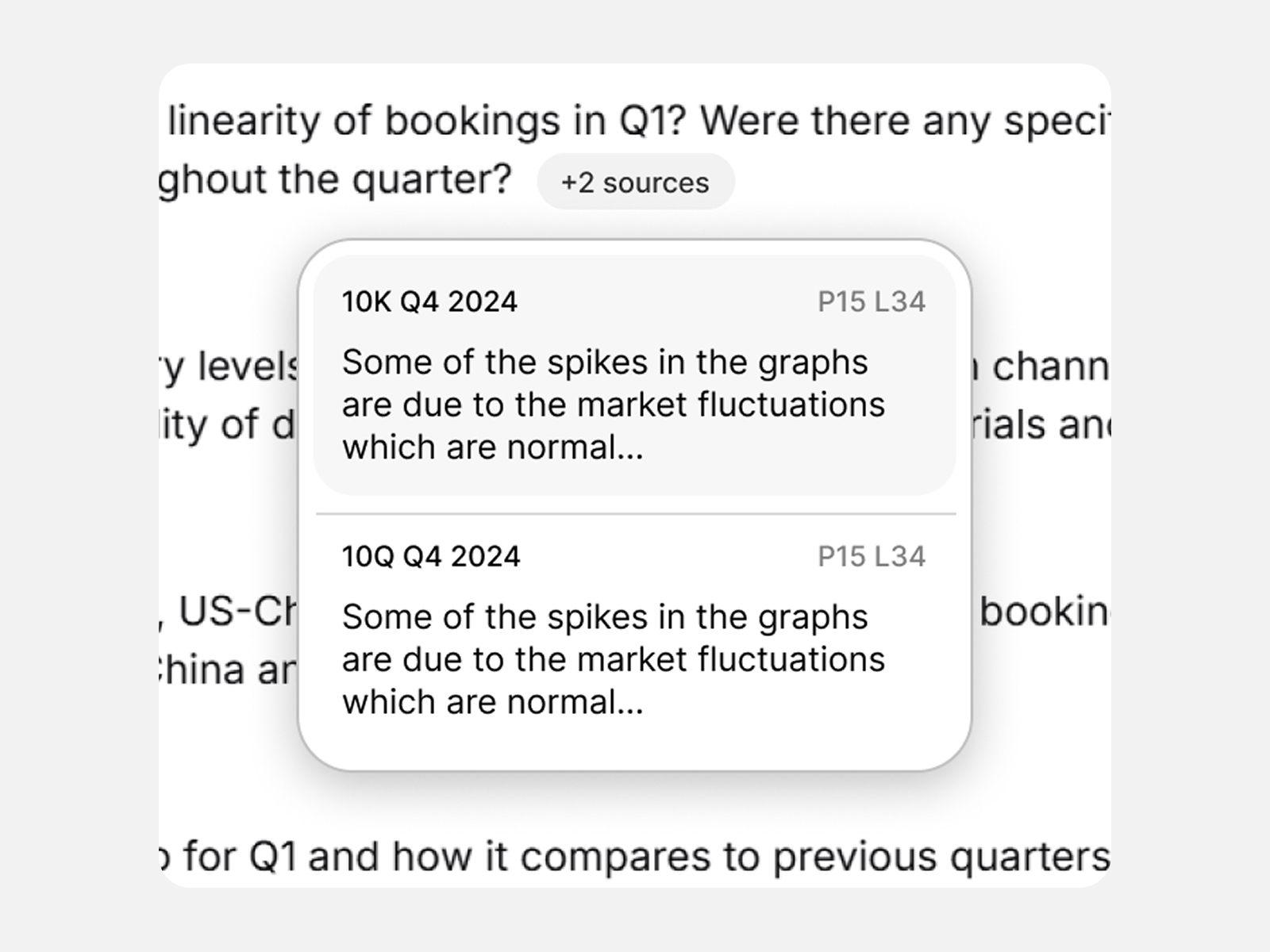

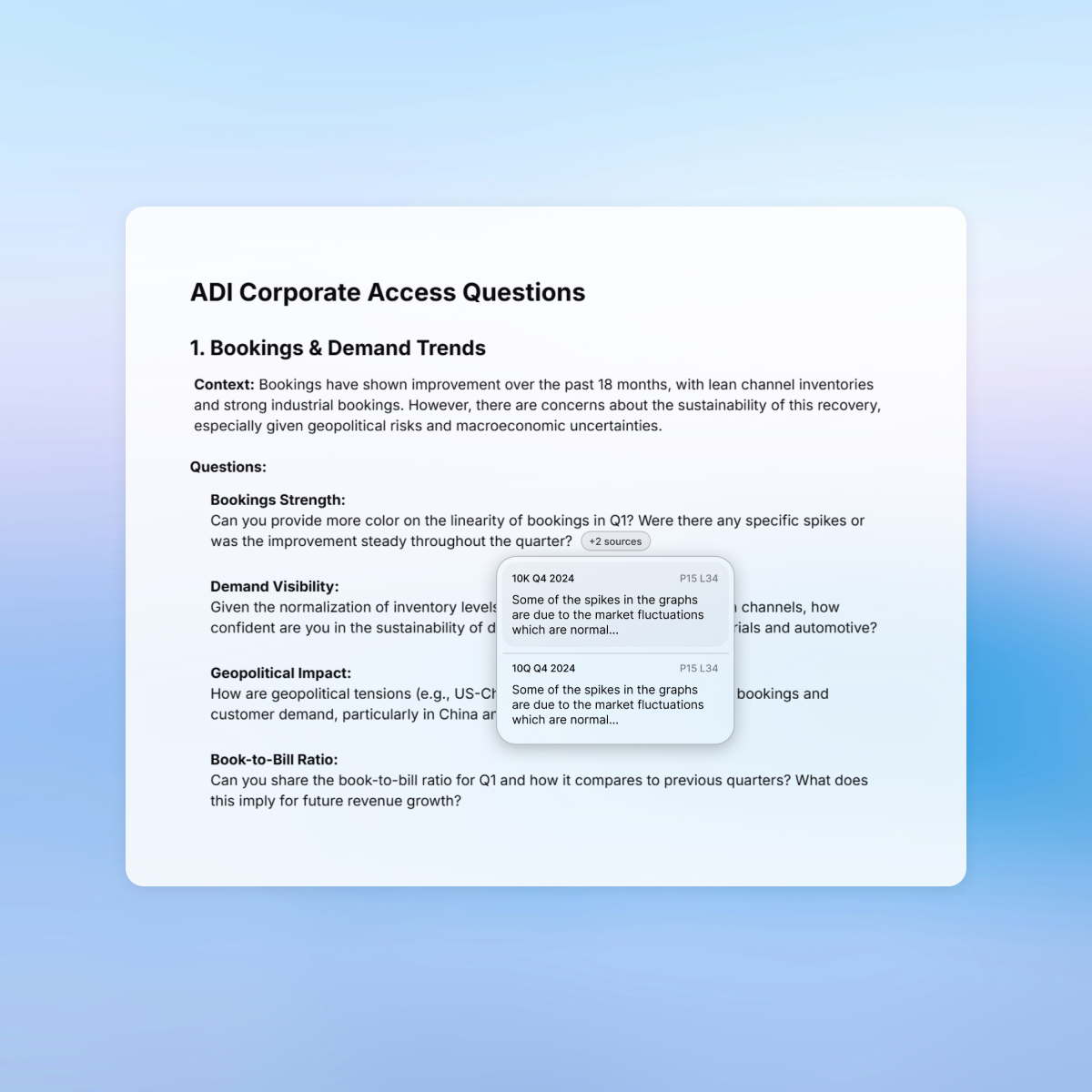

What I did: Designed every AI output to be traceable to its exact source, down to the specific line and page of the original document.

Why: Stakeholder calls revealed that analysts could not use any insight they couldn't verify. Trust wasn't a nice-to-have; it was the adoption barrier.

Result: Analysts brought AI-generated insights into live meetings with confidence for the first time.

What I did: Designed two versions of the interaction model and chose the simpler one, reducing the number of actions required per research session.

Why: Observed that analysts weren't making frequent discrete calls to the tool. A lighter interaction model matched actual usage patterns better than a feature-rich one.

Result: Lower cognitive load during high-pressure prep without sacrificing output quality.

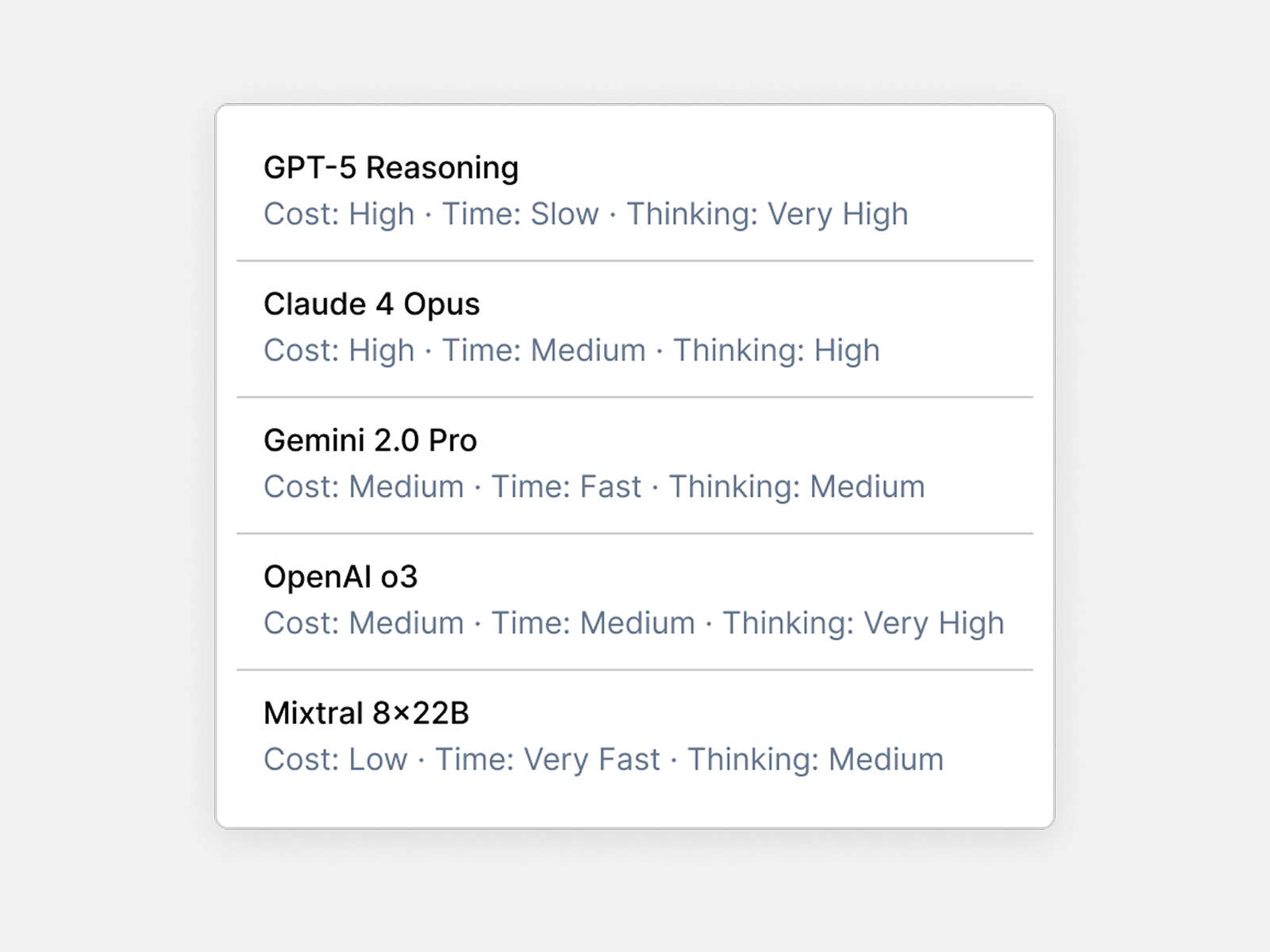

What I did: Designed the tool to support multiple AI models, allowing analysts to switch models and regenerate results within the same research session.

Why: Different analysts trusted different models. Locking to one model would have created adoption resistance and excluded valid working preferences.

Result: Broader team adoption and flexibility to evaluate outputs across models, with comparison capability on the roadmap.

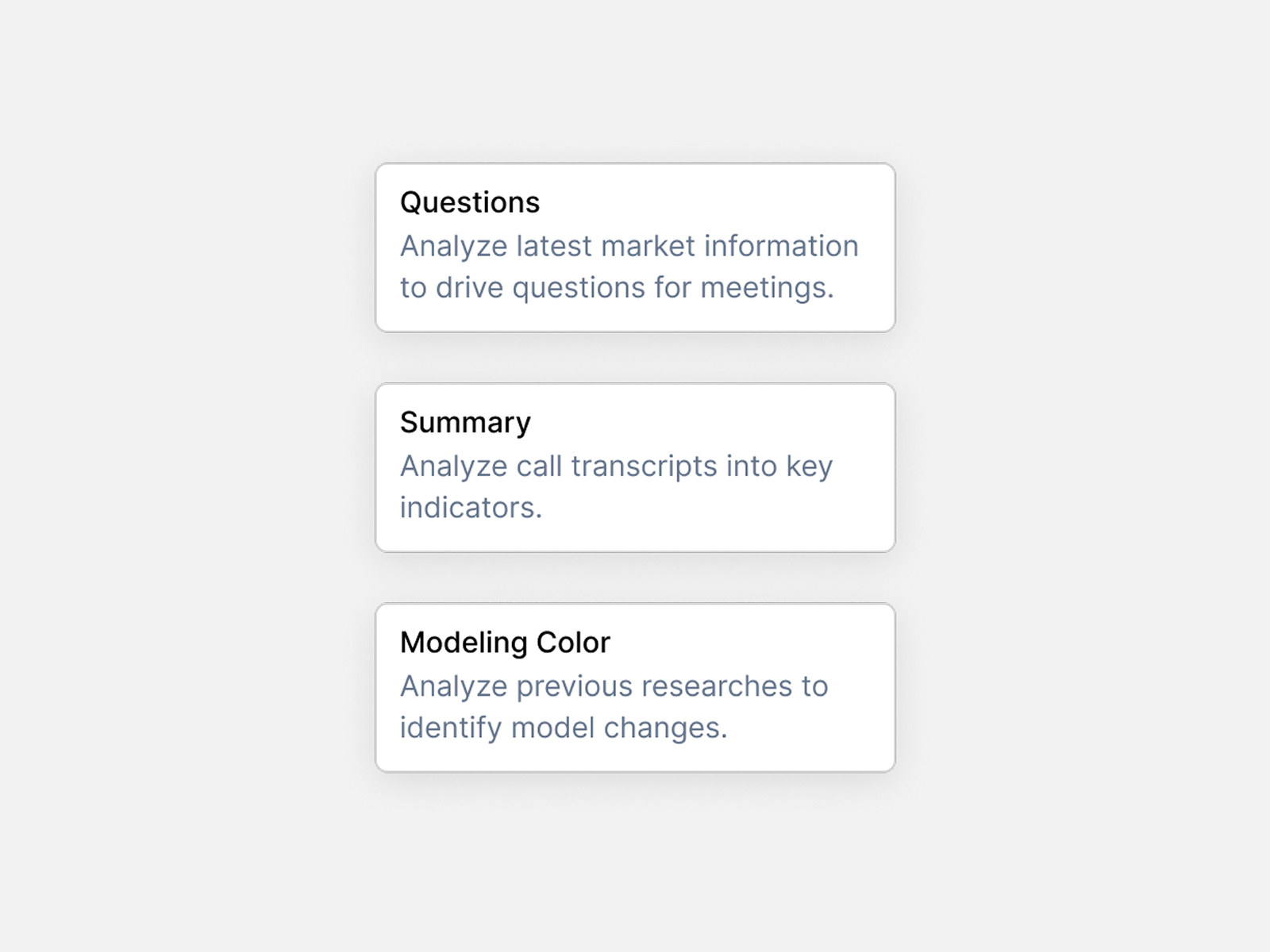

What I did: Designed a research intent layer where analysts select and define their intent before the AI generates anything. Prompts are stored, editable, and reusable across sessions.

Why: Without a defined intent, AI outputs were generic. Giving analysts control over the prompt layer made outputs more precise and reduced post-generation editing significantly.

Result: Analysts fine-tuned prompts over time, creating a personalized research workflow that improved with use.

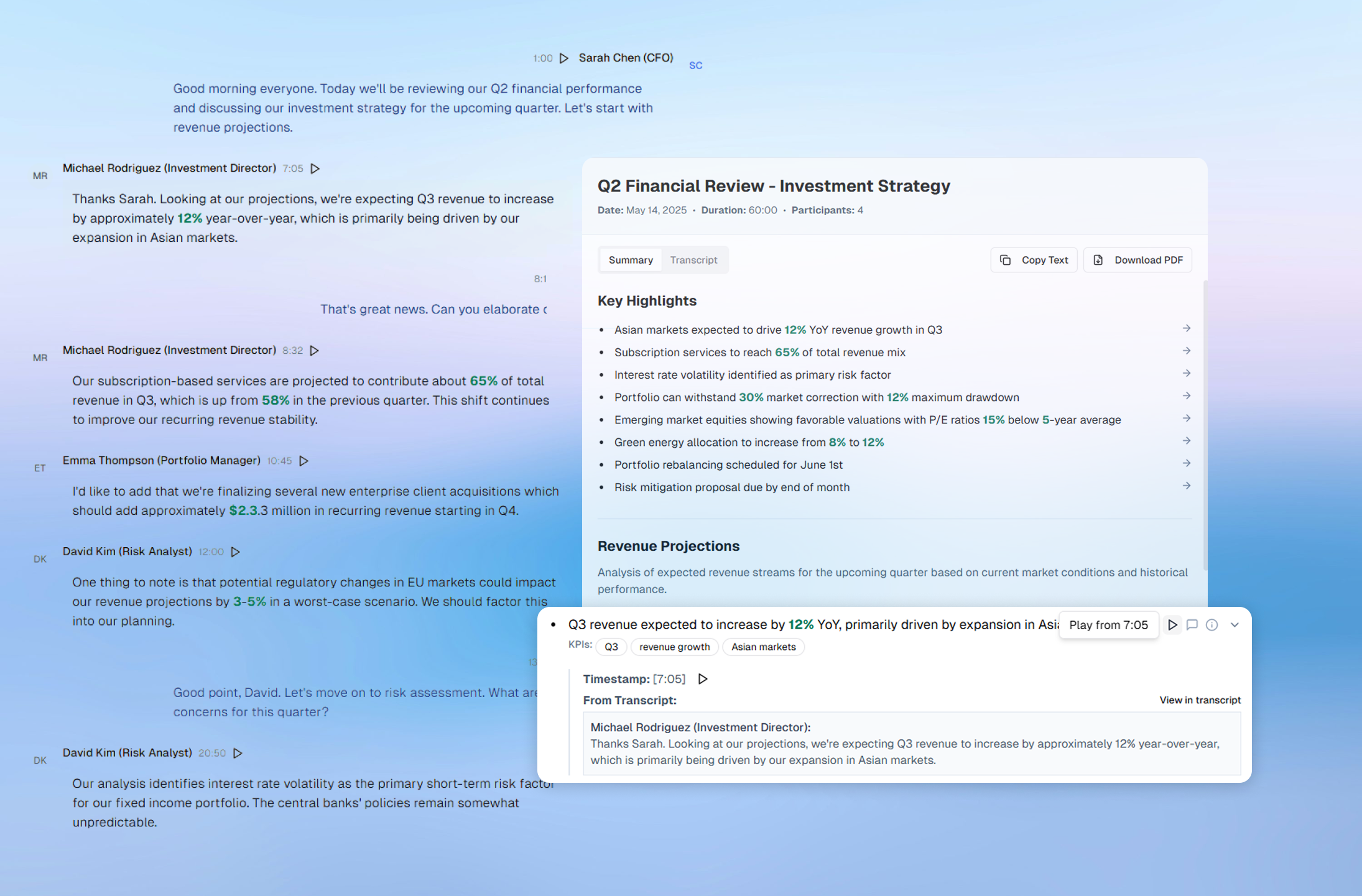

A modular web tool where hedge fund analysts can upload earnings calls, instantly extract KPIs, surface key quotes, and generate custom follow-up questions, all with traceable sources and editable outputs. The system fits directly into existing workflows, cutting prep time from an hour to minutes and standardizing analysis across teams.

AI-generated follow-up questions based on KPI movement, soft guidance, or missing context. Each question is editable and backed by the exact quote or metric that triggered it.

Win: Analysts carried 80% of AI-generated questions into live meetings, cutting prep time while maintaining precision.

The tool produces concise summaries of earnings and corporate access calls, segmented by topic and supported with direct citations. Every insight links back to a quote or metric, so nothing is taken at face value.

Win: Analysts no longer had to read 60+-page transcripts and trusted the assistant as a starting point for post-call debriefs.

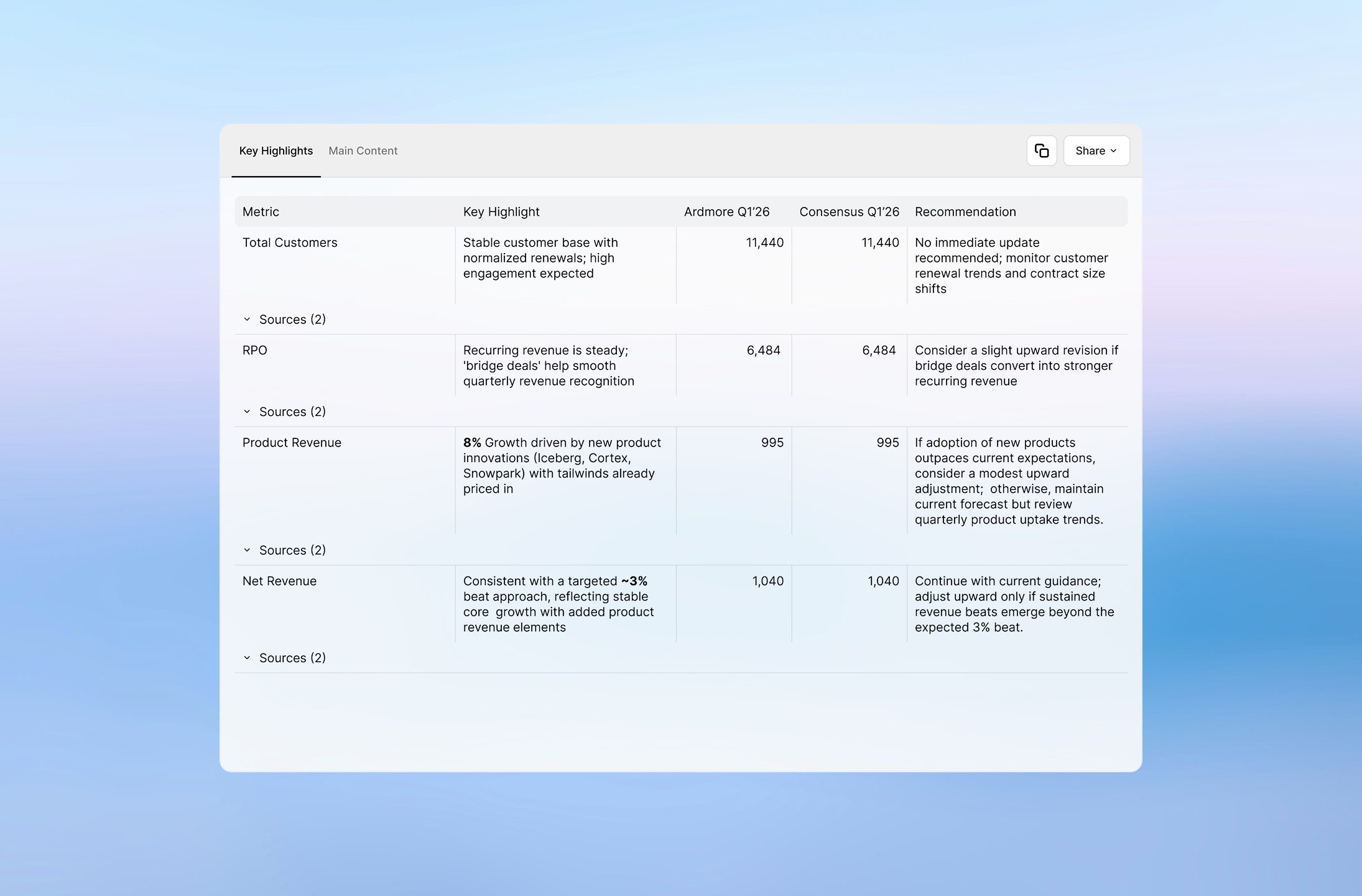

Extracted financials auto-populate a table that shows quarter-over-quarter changes with visual highlights. Analysts can edit values and trace them back to original statements instantly.

Win: Updating models took minutes instead of hours, with full control, context, and exportability.